A/B testing newsletter issues

Kentico EMS required

Features described on this page require the Kentico EMS license.

When preparing a newsletter issue for marketing purposes, it can be difficult to predict exactly how subscribers will react to important elements, such as the e‑mail subject or the primary call to action. You may also want to find out which option out of several alternatives will have the most positive effect. Optimizing your newsletter issues via A/B testing provides a possible solution to these problems.

A/B testing allows you to create multiple versions of a newsletter issue, called variants. You then mail out the variants to a test group of subscribers, typically a portion of the newsletter’s full mailing list. Based on the tracking statistics measured for the test group, you can identify the best version of the issue and send it to the remainder of the subscribers. The winning variant can either be selected automatically by the system according to specified criteria, or manually after you evaluate the test results.

A/B testing works best for newsletters that have a large number of subscribers. With too small a testing group, the results may be heavily affected by random factors and will not be statistically significant.
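To illustrate why the size of the test group matters, the following minimal sketch compares the open rates of two variants with a two-proportion z-test. This calculation is not part of Kentico. The function name and the sample numbers are hypothetical, and the 0.05 threshold mentioned in the comments is only a common convention.

```python
import math


def two_proportion_p_value(opens_a, sent_a, opens_b, sent_b):
    """Two-sided p-value for the difference between two open rates.

    Hypothetical helper for illustration only (not a Kentico API).
    """
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled open rate under the assumption that both variants perform equally.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Same open rates (24% vs. 18%), but very different test group sizes.
small_group = two_proportion_p_value(12, 50, 9, 50)
large_group = two_proportion_p_value(1200, 5000, 900, 5000)

print(small_group > 0.05)  # the difference could easily be random
print(large_group < 0.05)  # the difference is unlikely to be random
```

With 50 recipients per variant, the difference between the open rates cannot be distinguished from chance. With 5000 recipients per variant, the same rates give a clear result.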

Prerequisites

On-line marketing must be enabled for your website:

- Open the Settings application.

- Navigate to On-line marketing.

- Turn on the Enable on-line marketing setting.

- Click Save.

A/B testing variants of newsletter issues are evaluated based on the actions performed by recipients, so both types of E-mail tracking need to be enabled for tested newsletters:

- Open the Newsletters application.

- Edit each newsletter that you want to test and switch to its Configuration tab.

- Enable Track opened e-mails and Track clicked links.

- Click Save.

A/B testing is only supported for template-based (static) newsletters.

Creating A/B tests for newsletter issues

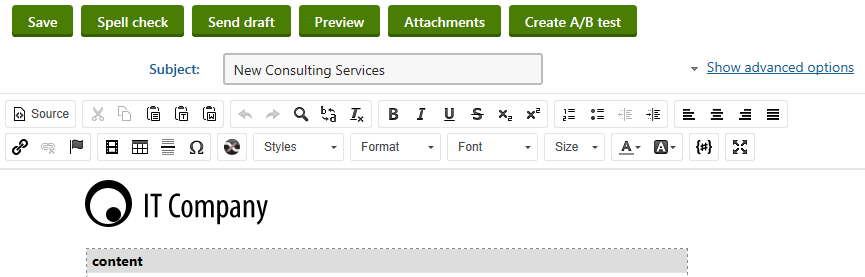

You can define A/B tests in the Newsletters application, either directly when creating new newsletter issues, or when editing existing issues on the Content tab.

For new issues, you need to Save the content of the e-mail message before you can create an A/B test.

Click Create A/B test to add the first testing variant for the issue.

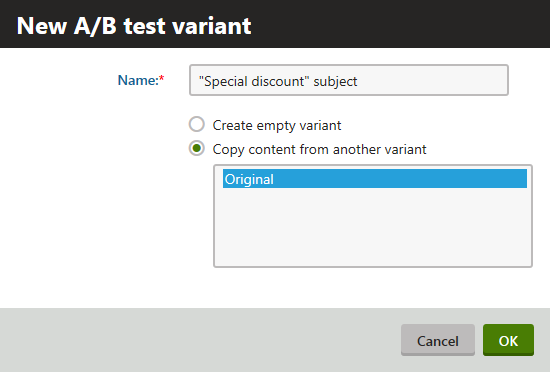

Type a Name for the variant.

- The name will be used to identify the issue variant while working with the A/B test.

Choose one of the following options to determine the initial content of the variant:

- Create empty variant - select this option to create the issue variant from scratch. The variant will use the main template set for the newsletter on the Configuration tab and the content of its editable regions will be empty.

- Copy content from another variant - if selected, the content of an existing variant (or the original issue) will be used as a starting point that you can modify as required. Choose the source from the list of issue variants. The variant will use the same template as the source issue and all editable region content will also be copied. This option makes it easy to create variants for testing small changes, such as a different e‑mail subject or text headline.

Click OK.

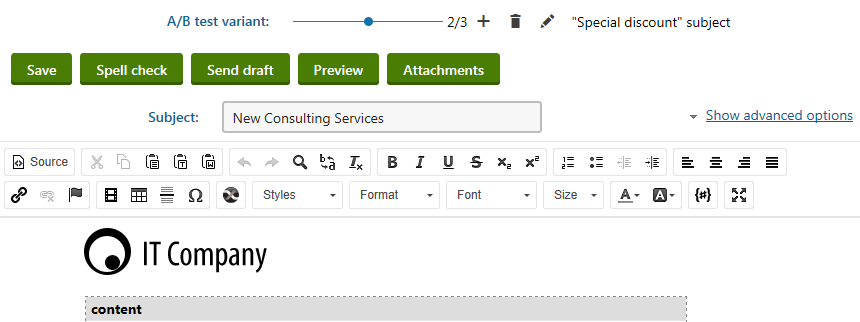

Now that you have defined an A/B testing variant for the issue, the content editing page shows a slider at the top. You can use the slider to switch between individual variants, including the original issue. The slider shows the name of the currently selected variant. You can manage the A/B test variants using the following buttons:

- Add variant - creates another variant of the issue. You can add any number of variants.

- Remove variant - deletes the variant currently selected through the slider.

- Edit properties - allows you to change the name of the currently selected variant. If required, you may also rename the original issue.

You can modify the settings and content of variants just like when editing standard issues. Each variant may have a different subject, issue template, editable region content, etc. This allows you to test any variables that you need.

Sending A/B test issues

Once you define all of the issue’s variants, you need to configure how the test is sent out and evaluated. You can do this either in the send step of the new issue wizard or when editing an existing issue on the Send tab.

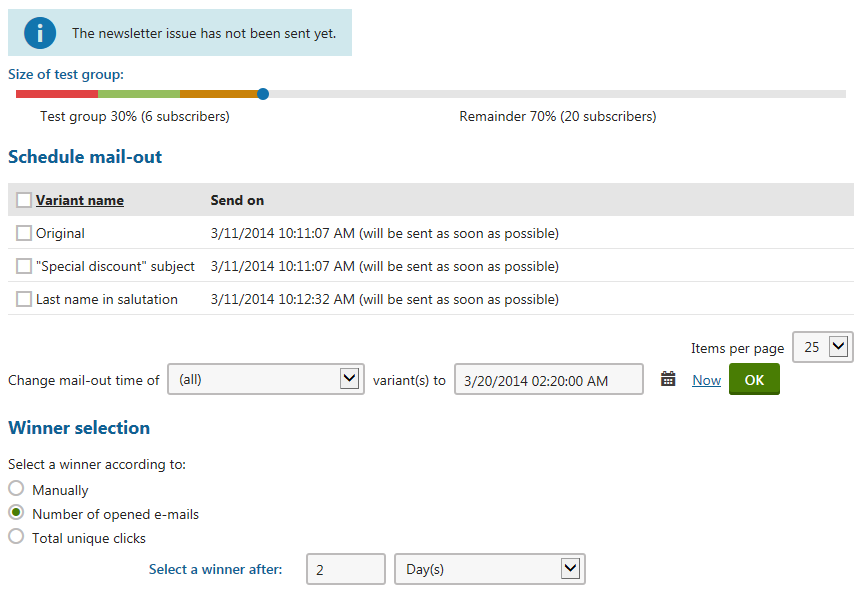

Define the size of the subscriber test group using the slider in the upper part of the page.

- By moving the slider’s handle, you can increase or decrease the number of subscribers that will receive the variants of the newsletter during the testing phase.

- The slider automatically balances the test group so that each variant is sent to the same number of subscribers.

- The overall test group size is always a multiple of the total number of variants created for the issue.

- The remaining subscribers who are not part of the test group will receive the variant that achieves the best results (i.e. the winner) after the testing process is complete.
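The balancing rules above can be sketched as a short calculation. This is only an illustration. The function name is hypothetical, and rounding the requested size down to the nearest multiple of the variant count is an assumption, because the exact rounding used by the slider is not described here.

```python
def balance_test_group(total_subscribers, variant_count, requested_percent):
    """Return (test_group_size, subscribers_per_variant, remaining_subscribers).

    Hypothetical helper: rounds the requested test group down so that every
    variant is sent to the same number of subscribers (assumed behavior).
    """
    requested = total_subscribers * requested_percent // 100
    per_variant = requested // variant_count
    group_size = per_variant * variant_count  # always a multiple of variant_count
    remaining = total_subscribers - group_size
    return group_size, per_variant, remaining


# 1000 subscribers, 3 variants, slider set to roughly 20%
print(balance_test_group(1000, 3, 20))  # (198, 66, 802)
```

In this example, each of the 3 variants goes to 66 subscribers, and the remaining 802 subscribers receive the winner after the test is complete.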

Using a full test group

You can set up a scenario where the test group includes 100% of all subscribers. In this case, the A/B test simply provides a way to evenly distribute different versions of the issue between the subscribers and the selection of the winner is only done for statistical purposes.

In the Schedule mail-out section below the slider, specify when to send out individual issue variants to the subscribers from the corresponding portion of the test group.

- To schedule the mail‑out, enter the required date and time into the field below the list (you can use the Calendar selector or the Now link) and click OK.

- You can either do this for all variants or only those selected in the list.

- If the mail-out time is the same for multiple variants, the system sends them at intervals of approximately 1 minute between individual variants.

In the Winner selection section, select how the A/B test chooses the winning variant:

- Number of opened e-mails - the system automatically chooses the variant with the highest number of opened e-mails as the winner. This type of testing focuses on optimizing the first impression of the newsletter, i.e. the subject of the e-mails and the sender name or address, not the actual content.

- Total unique clicks - the system automatically chooses the winner according to the number of link clicks measured for each variant. Each link placed in the issue’s content is only counted once per subscriber, even when clicked multiple times. This option is recommended if the primary goal of your newsletter is to encourage subscribers to follow the links provided in the issues.

- Manually - the A/B test does not select the winner automatically. Instead, the author of the issue (or other authorized users) can monitor the results of the test and choose the winning variant manually at any time.
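The following minimal sketch shows one way to read the Total unique clicks rule, where each link counts once per subscriber. It does not show how Kentico stores or evaluates tracking data. The event format, function name, and sample addresses are hypothetical.

```python
from collections import defaultdict


def unique_clicks(click_events):
    """Count unique (subscriber, link) pairs for each variant.

    click_events is a list of (variant, subscriber, link) tuples; this
    format is an assumption made for the example.
    """
    seen = defaultdict(set)
    for variant, subscriber, link in click_events:
        # A set ignores repeated clicks on the same link by the same subscriber.
        seen[variant].add((subscriber, link))
    return {variant: len(pairs) for variant, pairs in seen.items()}


clicks = [
    ("A", "ann@example.com", "/offer"),
    ("A", "ann@example.com", "/offer"),  # repeated click, not counted again
    ("A", "ann@example.com", "/blog"),
    ("B", "bob@example.com", "/offer"),
]

totals = unique_clicks(clicks)
winner = max(totals, key=totals.get)
print(totals, winner)  # {'A': 2, 'B': 1} A
```

The sketch ignores tied results. Those are covered in the Evaluating A/B tests section.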

When using an automatic selection option (one of the first two), set the duration of the testing period through the Select a winner after settings.

- This allows you to specify how long the system waits after the last variant is sent out.

- When the testing period is over, the test chooses the winner and mails it to the remaining subscribers.
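As a rough illustration of the timing, the testing period is counted from the mail-out of the last variant, not the first. The sketch below uses hypothetical names and sample times.

```python
from datetime import datetime, timedelta


def winner_selection_time(send_times, wait):
    """Return when the testing period ends.

    Hypothetical helper: the waiting period starts when the last variant
    is sent out.
    """
    return max(send_times) + wait


# Three variants scheduled for the same time are sent about 1 minute apart.
send_times = [
    datetime(2024, 5, 6, 9, 0),
    datetime(2024, 5, 6, 9, 1),
    datetime(2024, 5, 6, 9, 2),
]

selection = winner_selection_time(send_times, timedelta(hours=24))
print(selection)  # 2024-05-07 09:02:00
```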

Click Send (or Send and close in the new issue wizard).

- If you only wish to save the configuration of the A/B test without actually starting the mail-out, click Save (Save without sending) instead.

Evaluating A/B tests

The testing phase begins after the system sends out the first variant. If you need to make any changes to the configuration of the A/B test or the content of its variants, you can edit the issue.

On the Content tab, you may modify the variants that have not yet been mailed, but the slider actions are now disabled. It is no longer possible to add, remove or rename variants.

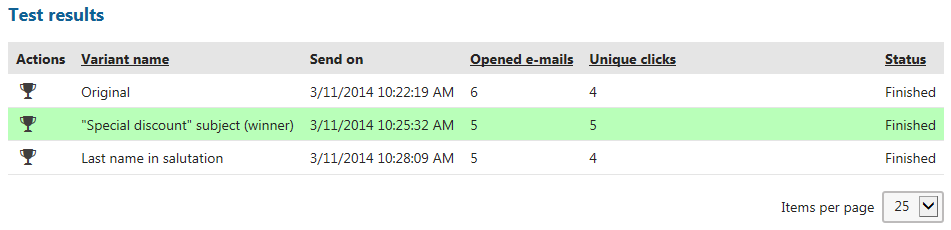

If you switch to the Send tab, the test group slider is now locked. However, you can view the e‑mail tracking data measured for individual variants in the Test results section. The current tracking statistics are shown for each variant, specifically the number of opened e‑mails and the number of unique link clicks performed by subscribers. By clicking these numbers, you can open a dialog with the details of the corresponding statistic for the given variant. It is also possible to reschedule the sending of variants that have not been mailed yet using the selector and date-time field below the list.

You can change the winner selection criteria at any point while the testing is still in progress.

Click Select as winner to manually choose a winner (even when using automatic selection). This opens a confirmation dialog where you can schedule when the winning issue variant is sent to the remaining subscribers. If you specify a date in the future, you will still have the option of choosing a different winner during the interval before the mail-out.

Special cases with tied results

If a draw occurs at the end of the testing phase (i.e. the top value in the tested statistic is achieved by multiple issue variants), the test postpones the selection of the winner and evaluates the variants again after one hour.

In certain situations, you may need to choose the winner manually even when using automatic selection. For example, this can happen if you are testing the number of opened e-mails and all subscribers in the test group view the received issue.
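The handling of tied results can be sketched as follows. This is an assumption-based illustration rather than the system's actual implementation, and all names are hypothetical.

```python
from datetime import datetime, timedelta

RETRY_DELAY = timedelta(hours=1)  # the test evaluates the variants again after one hour


def evaluate(stats, now):
    """Return (winner, next_evaluation_time) for the tested statistic.

    stats maps variant names to the measured value (opened e-mails or
    unique clicks). Hypothetical helper for illustration only.
    """
    top = max(stats.values())
    leaders = [name for name, value in stats.items() if value == top]
    if len(leaders) == 1:
        return leaders[0], None
    # Draw: postpone the selection and try again later.
    return None, now + RETRY_DELAY


now = datetime(2024, 5, 7, 9, 2)
print(evaluate({"A": 40, "B": 40}, now))  # (None, datetime.datetime(2024, 5, 7, 10, 2))
print(evaluate({"A": 41, "B": 40}, now))  # ('A', None)
```

If the statistics can no longer change, for example because every subscriber in the test group has already opened the e-mail, the draw never resolves on its own and the winner has to be selected manually.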

Once the test is concluded and the winner is decided, the given variant is highlighted by a green background. At this point, the system mails out the winning issue to the remaining subscribers who were not included in the test group. You cannot perform any actions with the test except for viewing the statistics of the variants.

You can monitor the overall statistics of A/B tested issues on the Issues tab of the newsletter, including the e-mails used to deliver the winning variant to the subscribers outside of the test group. When viewing the opened e-mail or clickthrough data in the detail dialogs, you may use the additional Variants filter to display either the total (all) values for the entire issue, or only those of specific variants. The statistics of the winning variant include both the corresponding portion of the test group and the remainder of the subscribers who received the issue after the completion of the testing phase.