Specialized SaaS deployment scenarios

Minimum Xperience by Kentico version

The minimum Xperience by Kentico version for new projects that can be deployed to the SaaS environment is 30.1.0. If you are using an earlier version, you need to update your project to the latest version before deploying it to the SaaS environment. Existing projects are not affected, but we recommend updating them to the latest version.

This page covers two specialized SaaS deployment situations: deploying a large amount of data during initial deployments using custom restore, and deploying emergency fixes. See Deploy to the SaaS environment for common deployment scenarios.

Deploy a large amount of data during initial deployments

During initial deployments (e.g., when upgrading to Xperience by Kentico), you can use custom restore to deploy a large amount of data without being restricted by the 2 GB deployment package limit.

Custom restore enables you to transfer the database and storage files directly to the selected environment. You must upload both the database and the storage files. Custom restore does not provide a way to deploy application libraries, so you need to use it in combination with a deployment package.

During the process, you first upload the data to automatically created containers in Azure Storage that are separate from your SaaS environments. When you apply the custom restore, all existing data in the target environment’s database and storage is overwritten with the uploaded data, and the target environment is unavailable while the restore itself runs.

Changes introduced by a custom restore are not propagated to other environments by deployment packages. To transfer them to other environments, perform a custom restore in each environment.

Perform a custom restore

Only Tenant administrator and DevOps roles can manage custom restore sessions (create and close sessions, revoke access) and restore to all environments. Developers can restore to non-production environments (QA, UAT) and access the restore containers.
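The permission rules above amount to a small lookup. The following is a minimal Python sketch, not an Xperience Portal API: the role and environment strings and the function names `can_manage_sessions` and `can_restore_to` are hypothetical. It treats only QA and UAT as non-production, as listed above. If your project has other non-production environments, such as STG, adjust the set.

```python
from __future__ import annotations

# Hypothetical labels for this sketch; they mirror the roles and
# environments named on this page, not an Xperience Portal API.
ADMIN_ROLES = {"tenant administrator", "devops"}
DEVELOPER_ROLE = "developer"
NON_PRODUCTION_ENVIRONMENTS = {"qa", "uat"}


def can_manage_sessions(role: str) -> bool:
    """Creating and closing sessions and revoking access: admin roles only."""
    return role.strip().lower() in ADMIN_ROLES


def can_restore_to(role: str, environment: str) -> bool:
    """Admin roles can restore anywhere; developers only to QA or UAT."""
    normalized_role = role.strip().lower()
    if normalized_role in ADMIN_ROLES:
        return True
    if normalized_role == DEVELOPER_ROLE:
        return environment.strip().lower() in NON_PRODUCTION_ENVIRONMENTS
    return False


print(can_restore_to("Developer", "UAT"))   # True
print(can_restore_to("Developer", "PROD"))  # False
print(can_restore_to("DevOps", "PROD"))     # True
```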

To perform a custom restore:

Back up your data.

- Create a restore point for the target environment before starting.

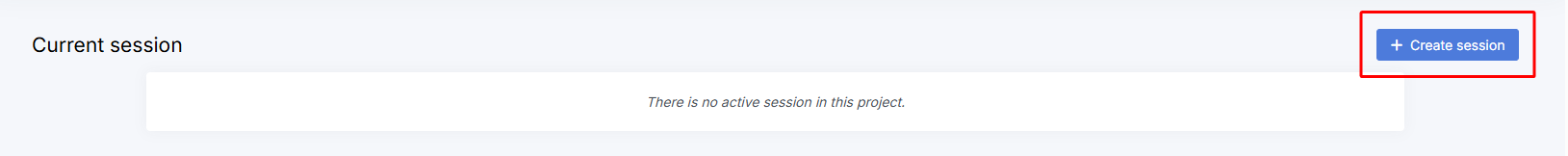

Create a restore session.

- Navigate to Data Management → Custom restore.

- Select Create session.

Get access to restore containers.

- Select Get access.

- If you want to restrict access to the restore containers, specify an allowlist by entering an IP address or an IP address range and confirm with Get access. Otherwise, select Get access without entering any values.

- Copy both SAS URLs for the database and storage containers.

SAS URLs

You can’t retrieve the same SAS URLs again. However, you can generate new SAS URLs by selecting Get access, and previously issued SAS URLs remain valid. To revoke all current SAS URLs, select Revoke access under Current session.

Within a session, the SAS URL pairs provide access to the same containers.
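If you script uploads instead of using Azure Storage Explorer, keep in mind that the copied values are container SAS URLs. To address an individual blob, insert the blob name into the URL path and leave the SAS token query string unchanged. The following is a minimal Python sketch using only the standard library. `blob_sas_url` is a hypothetical helper, and the URL in the usage example is made up. Treat real SAS URLs as secrets, because anyone who has one can access the container until access is revoked.

```python
from urllib.parse import quote, urlsplit, urlunsplit


def blob_sas_url(container_sas_url: str, blob_name: str) -> str:
    """Insert a blob name into a container SAS URL, keeping the SAS token.

    A container SAS URL has the form
    https://<account>.blob.core.windows.net/<container>?<sas-token>.
    The blob URL is the container path plus "/<blob_name>", followed by
    the same token.
    """
    parts = urlsplit(container_sas_url)
    # Percent-encode the blob name but keep "/" so nested paths such as
    # default/assets/... become virtual folders in the container.
    blob_path = quote(blob_name.lstrip("/"), safe="/")
    path = parts.path.rstrip("/") + "/" + blob_path
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


url = blob_sas_url(
    "https://example.blob.core.windows.net/restore-db?sv=2022-11-02&sig=abc",
    "db.bacpac",
)
print(url)
# https://example.blob.core.windows.net/restore-db/db.bacpac?sv=2022-11-02&sig=abc
```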

Upload the database.

- You can use Azure Storage Explorer to connect to the container:

- In Azure Storage Explorer, connect to the restore Azure Storage (select the type Blob container or directory).

- Select the connection through Shared access signature URL (SAS).

- Enter the SAS URL for the database container and connect to the resource. The display name does not matter.

- Upload the database exported as a bacpac file to the database container.

Requirements:

- The file must be named db.bacpac.

- The database to be restored must have the same version as the one currently in the environment.

- The database upload is required even if you only intend to update storage files (and vice versa).

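Before you upload, you can export the database and check the resulting file locally. This Python sketch builds a command for Microsoft's SqlPackage CLI, which provides the `/Action:Export`, `/SourceConnectionString`, and `/TargetFile` options. It then checks that the file is named db.bacpac and is a ZIP-based package, which all .bacpac files are. The helper names are hypothetical. The sketch cannot check the version requirement, because that depends on the target environment.

```python
from __future__ import annotations

import zipfile
from pathlib import Path

REQUIRED_FILE_NAME = "db.bacpac"  # the file name the custom restore expects


def sqlpackage_export_command(connection_string: str,
                              target_file: str = REQUIRED_FILE_NAME) -> list[str]:
    """Build a SqlPackage command line that exports a database to a .bacpac file."""
    return [
        "sqlpackage",
        "/Action:Export",
        f"/SourceConnectionString:{connection_string}",
        f"/TargetFile:{target_file}",
    ]


def bacpac_problems(path: str | Path) -> list[str]:
    """Return problems that would block the upload; an empty list means OK."""
    file = Path(path)
    problems = []
    if file.name != REQUIRED_FILE_NAME:
        problems.append(f"file must be named {REQUIRED_FILE_NAME}, not {file.name}")
    if not file.is_file():
        problems.append("file does not exist")
    elif not zipfile.is_zipfile(str(file)):
        # .bacpac files are ZIP packages, so a non-ZIP file is not a valid export
        problems.append("file is not a ZIP-based .bacpac package")
    return problems


# Example (requires SqlPackage on PATH and a reachable database):
# import subprocess
# subprocess.run(sqlpackage_export_command("<CONNECTION_STRING>"), check=True)
# print(bacpac_problems("db.bacpac"))
```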

Upload storage files.

Connect to the storage container using the second SAS URL (as in the previous step).

Upload the files to be stored in Azure Blob Storage.

- For each container used in your Azure Blob Storage configuration, upload a folder with the same name. Within that container folder, recreate each path mapped to that container in StorageInitializationModule.cs and upload the corresponding contents into those locations.

- For example, if ~/assets/contentitems is mapped to a container, its contents go under <container-name>/assets/contentitems/. If you’re using the default configuration with the container named default, the structure would be default/assets/….


- You can also obtain this structure by downloading a Storage and files export once you have deployed with this Blob storage configuration.

- The names of files and folders uploaded to the storage container must be entirely lowercase.

- Note that the uploaded files count toward the file storage limit, which you can view on the Dashboard.

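You can check the folder layout and naming rules for storage files locally before you upload. This minimal Python sketch assumes you stage the upload in a local folder that mirrors the storage container. `upload_prefix` and `non_lowercase_names` are hypothetical helpers. `upload_prefix` derives the container folder for a path mapped in StorageInitializationModule.cs, following the ~/assets/contentitems example. `non_lowercase_names` lists any staged file or folder whose name is not entirely lowercase.

```python
from __future__ import annotations

from pathlib import Path


def upload_prefix(container_name: str, mapped_path: str) -> str:
    """Return the folder in the storage container for a mapped path.

    Example: "~/assets/contentitems" mapped to container "default"
    gives "default/assets/contentitems/".
    """
    relative = mapped_path.lstrip("~").strip("/")
    return f"{container_name}/{relative}/" if relative else f"{container_name}/"


def non_lowercase_names(root: str | Path) -> list[str]:
    """List staged files and folders (relative to root) with uppercase letters."""
    base = Path(root)
    return sorted(
        path.relative_to(base).as_posix()
        for path in base.rglob("*")
        if path.name != path.name.lower()
    )


print(upload_prefix("default", "~/assets/contentitems"))  # default/assets/contentitems/
```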

Restore to an environment.

- Make sure you have a backup of the target environment. The custom restore process itself cannot be undone, but you can use restore points to revert the changes.

- Once you have uploaded both the database and the storage files to be restored, you can select Restore to environment under Current session.

- Select the target environment where the data should be restored. The environment will be unavailable during the restore process. The database in the target environment will be replaced with the uploaded one. All storage containers in the environment will be deleted and recreated based on the uploaded data.

- Select Create to start the custom restore process.

Tenant administrator and DevOps roles can restore to any environment. Developers can restore only to non-production environments (QA, UAT).

Close the session.

- You can see the restore in progress under Active restores. Once finished, it will appear under Last completed restores.

- When the restore process is complete and you no longer need to keep the data (for example, to restore it to another environment), Close the session.

Each session expires after a week. Once a session has expired, you can no longer upload data to the containers, but you can still restore the data you uploaded previously (up to 180 days from the session creation). Only one restore session can be open at a time.
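The session lifetime rules above come down to date arithmetic. In this minimal Python sketch, `session_capabilities` is a hypothetical helper. It assumes both the one-week upload window and the 180-day restore window are measured from session creation. This page does not say whether the exact boundary moments are inclusive, so the comparison operators are an assumption.

```python
from datetime import datetime, timedelta, timezone

# Limits stated on this page; the inclusive/exclusive bounds are assumptions.
UPLOAD_WINDOW = timedelta(days=7)     # the session, and uploads, expire after a week
RESTORE_WINDOW = timedelta(days=180)  # restores are possible up to 180 days from creation


def session_capabilities(created_at: datetime, now: datetime) -> dict:
    """Report what a restore session still allows at time `now`."""
    age = now - created_at
    return {
        "can_upload": age < UPLOAD_WINDOW,
        "can_restore": age <= RESTORE_WINDOW,
    }


created = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(session_capabilities(created, created + timedelta(days=10)))
# {'can_upload': False, 'can_restore': True}
```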

Deploy emergency fixes to the SaaS environment

Emergency deployments allow users in DevOps Engineer or Tenant Administrator roles to bypass the standard deployment flow and push critical fixes or version updates directly to environments other than QA.

The emergency deployment process is suitable for the following situations:

- The Xperience version deployed in your PROD environment is, for example, 30.0.0. In the QA environment, the deployed version is 30.1.0 with some new features you are currently testing. Kentico then publishes a security advisory along with version 30.2.0, and you want to update to this version immediately. However, your QA environment is “blocked” by the features under test.

- You have recently released new functionality that contains an issue that impairs the usability of your application. The fix is simple, but you need to promote it to the PROD environment as soon as possible. However, your QA environment is “blocked” by a new feature that is currently being tested there.

Handle deployments to Production with care

Do not use emergency deployments unless you are absolutely sure that you can deploy the changes to the PROD environment without first testing them in another SaaS environment. If you have an environment other than QA and PROD available (e.g., UAT, STG), it is safer to deploy the fix to that environment first, test it, and then deploy to PROD from there.

Product versions across different environments

Keep in mind that downgrading a product version is not possible. Consider the following setup:

- Product version 30.1.0 is deployed in the QA environment.

- Product version 30.2.0 is deployed in the PROD environment using the emergency deployment.

In this setup, you cannot promote the package from the QA environment to the PROD environment. You first need to update the package in QA to the same version as PROD or newer (30.2.0 or newer in this example).
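The promotion rule in this example comes down to a numeric version comparison. In this minimal Python sketch, `parse_version` and `can_promote` are hypothetical helpers. It assumes plain major.minor.patch version strings and compares them as numbers, so 30.10.0 counts as newer than 30.2.0.

```python
from __future__ import annotations


def parse_version(version: str) -> tuple[int, ...]:
    """Convert '30.2.0' to (30, 2, 0); missing parts count as 0 ('30.2' -> (30, 2, 0))."""
    parts = [int(part) for part in version.strip().split(".")]
    return tuple(parts + [0] * (3 - len(parts)))


def can_promote(source_version: str, target_version: str) -> bool:
    """Allow promotion only if it would not downgrade the target environment."""
    return parse_version(source_version) >= parse_version(target_version)


print(can_promote("30.1.0", "30.2.0"))   # False: QA is older than PROD
print(can_promote("30.2.0", "30.2.0"))   # True
print(can_promote("30.10.0", "30.2.0"))  # True: numeric, not string, comparison
```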

Deploy emergency fixes from Xperience Portal

Notes

- This action is available only for users in DevOps Engineer or Tenant Administrator roles.

- This action may cause downtime of the target environment unless the deployment package is marked as zero-downtime ready and the target environment already contains zero-downtime ready code.

- Create the emergency deployment package. If your application supports it, consider using the -ZeroDowntimeSupportEnabled parameter to minimize deployment downtime.

- Upload the package from Xperience Portal. In the Deployments application, select the Upload package button under the desired environment.

Deploy emergency fixes using Xperience Portal API

Notes

- This action is available only for users in DevOps Engineer or Tenant Administrator roles.

- This action may cause downtime of the target environment.

- Create the emergency deployment package.

- If you don’t have one already, create a Personal access token with the required permissions for respective environments: Deploy to production environments or Deploy to non-production environments.

- Upload a package using Xperience Portal API. When sending the POST request to the deployment API endpoint, modify or add the last path segment and set the value to the desired environment (e.g., prod for Production or stg for Staging).

```powershell
# Due to a PowerShell issue, we recommend disabling the progress bar, which significantly improves upload performance.
# See https://github.com/PowerShell/PowerShell/issues/2138 for more information.

# Disable the progress bar
$ProgressPreference = 'SilentlyContinue'

try {
    # Upload the deployment package
    $headers = @{ Authorization = "Bearer <PERSONAL_ACCESS_TOKEN_WITH_REQUIRED_PERMISSION>" }
    Invoke-RestMethod -Uri "https://xperience-portal.com/api/deployment/upload/<PROJECT_GUID>/prod" -Method Post -InFile "<FILE_PATH>" -ContentType "application/zip" -Headers $headers
}
finally {
    # Re-enable the progress bar, even if the upload fails
    $ProgressPreference = 'Continue'
}
```
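If you upload from a tool or CI job that does not run PowerShell, you can build the same request with Python's standard library. This is a sketch, not an official client. The endpoint, the Bearer token header, and the application/zip content type come from the PowerShell example above. `build_upload_request` is a hypothetical helper. The sketch only prepares the request, and sending it is shown as a comment.

```python
from __future__ import annotations

import urllib.request
from pathlib import Path

# Endpoint pattern from the PowerShell example; the last segment is the
# target environment, e.g. "prod" or "stg".
UPLOAD_URL = "https://xperience-portal.com/api/deployment/upload/{project_guid}/{environment}"


def build_upload_request(project_guid: str, environment: str, token: str,
                         package_path: str | Path) -> urllib.request.Request:
    """Prepare (but do not send) the POST request that uploads a deployment package."""
    url = UPLOAD_URL.format(project_guid=project_guid, environment=environment)
    # Sketch only: reads the whole package into memory.
    body = Path(package_path).read_bytes()
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/zip"},
    )


# To send it:
# request = build_upload_request("<PROJECT_GUID>", "prod", "<TOKEN>", "<FILE_PATH>")
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

Reading the package into memory keeps the sketch short. For packages close to the 2 GB limit, an HTTP client that streams the file is a better choice.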